UCLA MAE Assistant Professor M. Khalid Jawed researches a data-driven approach to modeling the mechanics of structures and fluid-structure interaction using robotics, automation, computation, and machine learning.

What was your journey from Dhaka, Bangladesh to UCLA?

As I was growing up, I was very interested in aerospace—airplanes, spacecraft, and such. At that time in Bangladesh, I don’t think there was any degree-granting institution that offered a degree in aerospace, so I moved to the University of Michigan as an undergraduate, where I majored in aerospace and physics. That was one of the main reasons for the move—to study the science behind airplanes and spacecraft. My specialization as an undergraduate was structural and solid mechanics, where we studied the science behind different materials and structures. I got very interested in that domain and studied it at MIT, where I earned my master’s [degree] and PhD. Then I did a brief postdoc at Carnegie Mellon University, where I applied my knowledge of solid and structural mechanics to designing soft robots, and this is where the combination of mechanics and robotics came into my life. When I moved on to UCLA and got to start my own lab, this is what we focused on. Currently, we are studying mechanics primarily in the context of robotics. It all started with my interest in aerospace, which led me to move to the US East Coast; then, finally, I moved here.

What is your impression so far of Los Angeles and UCLA? Did you first come to LA when you interviewed here, or had you been here before?

Our collaborator was at Caltech when I was at Carnegie Mellon, so I visited Caltech then. I have been here a few times, but only to visit for a few days.

What I really like about LA is that we are so close to the relevant industries. It’s close to Hollywood which also helps in that some of the technology and numerical simulations that we use in our lab are also used in movies. When I was a PhD student, one of my thesis committee members was a computer graphicist. Being close to the aerospace industry as well as the animation industry helps. I also just like the weather here; I think it keeps the people more productive, and I also think it attracts very good students to UCLA. The quality of the undergraduate and graduate students is just phenomenal. I am very fortunate to have such amazing graduates and undergraduates who genuinely helped me build the lab and push the enterprise. Overall, I think moving to LA was a very good decision.

Please explain for a layperson what “Learning Mechanics from Machines” means.

By machines we mean robots and computers. Let’s start with robots. You can do experiments and get data to learn about mechanics. It would be great if a robot could do a lot of the experiments for you because, first, the quantity of data would be higher, and second, the data would be of better quality. Now let’s move on to computers. Say you want to study a structure that you can’t really build—maybe something very complicated that takes a long time to build. Say we want to design a soft robot. It would be much easier for us if we could simulate its behavior in a computer without building it, find out whether it will be able to do the job for us or not, and then ultimately build it. That is what we mean by “Learning Mechanics from Machines”.

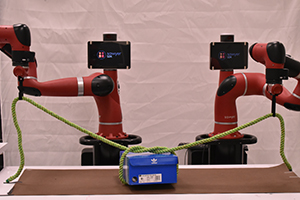

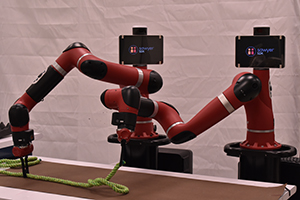

We focus on robotic manipulation—how a robot can manipulate clothes, ropes, and other structures—which can give us experimental data from robots. More importantly, it can be used in the real world as well; you can imagine an assistive robot helping you fold clothes. When it comes to computers, now that we have faster and smarter computers, we can design better structures in the computer before building them, which is beneficial. Say we are talking about soft robots—robots that have certain parts that are soft, which makes them inherently safe to work with humans. Traditionally, and even today, if you want to build a soft robot or even a soft-robotic gripper, that is done by a crude trial-and-error method. You build something, it doesn’t work, and you have to try something else that might not work either. You do iteration after iteration, which takes up a lot of time and money. We are trying to largely remove this trial-and-error step and come up with a computational framework for soft robots that will give you a design that you can just build. We are still pretty far from that—it will take some time. There will always be a couple of iterations, but we are trying to cut down the number of iterations that you would need to do.

So the trial and error would all happen in the math, and you would not need to build any physical models until the simulation is correct. You just have algorithms that you run again and again until you get it right. Is this correct?

Yes, and more importantly, to get it to be the best. There might be a couple of designs that will work, but we have to find which one will be the best; for example, which robot will be the fastest, which one will use the least amount of energy, and so on. These are some of the questions that we address. In that regard, there has been a sort of revolution in artificial intelligence and machine learning in developing methods for optimization and design, and we borrow methods from these disciplines and use them in our field of mechanics to design and build better structures.
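
As an editorial illustration of the idea above (choosing the best among several designs that work), here is a minimal Python sketch. It is not the lab's method: the `Design` class, the candidate names, and all numbers are hypothetical, and a simple exhaustive search stands in for the optimization and machine learning methods mentioned in the answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Design:
    """A hypothetical candidate design with made-up simulated results."""
    name: str
    works: bool            # did the simulated design do the job at all?
    speed_m_per_s: float   # simulated speed (illustrative value)
    energy_j: float        # simulated energy use (illustrative value)


def best_design(candidates, cost):
    """Return the working design with the lowest cost.

    `cost` maps a Design to a number; lower is better.
    """
    feasible = [d for d in candidates if d.works]
    if not feasible:
        raise ValueError("no candidate design works")
    return min(feasible, key=cost)


# Hypothetical candidates; in practice these values would come from simulation.
candidates = [
    Design("A", works=True, speed_m_per_s=0.10, energy_j=5.0),
    Design("B", works=True, speed_m_per_s=0.25, energy_j=9.0),
    Design("C", works=False, speed_m_per_s=0.40, energy_j=2.0),
]

# "Fastest" means maximizing speed, so the cost is the negative speed.
fastest = best_design(candidates, cost=lambda d: -d.speed_m_per_s)
# "Least energy" means minimizing energy directly.
most_efficient = best_design(candidates, cost=lambda d: d.energy_j)
```

Note that the two goals can pick different designs, which is why the objective has to be stated before searching.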

What are the practical applications of your research that the average person would see in their everyday life? One practical application of your research would obviously be to speed along the design process. I assume the average person will see concept designs come to market faster since fewer models have to be built. Would that be correct?

I believe so. We can talk about a few direct practical applications. For example, let’s consider folding clothes—something people do in their everyday life. Why is it so difficult for robots to do it? The reason is that when you are folding clothes, you have to predict the deformation of the structure. Humans subconsciously know how their clothes, which are very flexible structures, will deform as they manipulate them. The same goes for tying a shoelace. A human can tie a shoelace easily in a couple of seconds. We think that robots should be able to predict these kinds of deformations, or that they should have this knowledge of mechanics, before they can seamlessly manipulate flexible structures such as clothes and ropes. That would be one direct application. We can think about soft robots as well. Let’s say that we want to design a gripper that will pick up fruit. If the gripper is made of a very rigid material, it may damage the fruit while picking it up. Creating an effective gripper would be a second practical application. A third would be deployables. You can have an antenna in a spacecraft that goes up and deploys. We are looking into structures that will be fully flexible yet deployable: a material that can be crumpled in your hands and, when unfolded, can take a spherical shape, for example. We are trying to engineer structures that take the prescribed shape I want but are flexible like a towel or paper. These are probably the main direct applications that we are looking into. From a scholarly point of view, you are right in that we are trying to use the abundance of computational power that we have to quickly prototype and design structures that can go from the lab to the real world sooner.

On the topic of artificial intelligence, are we headed into a future where we will one day have autonomous machines that will be able to replicate and maintain themselves? Maybe even evolve and improve on their own? For example, a robot-run warehouse that has shipping robots that ship out online package orders. Within that warehouse there are repair-bots that repair the shipping-bots, so there are no human interactions. A self-contained society of autonomous machines within a warehouse. If the machines can repair themselves, surely they can replicate the parts by machining them. Is this possible?

A human can develop an algorithm that he/she will give this robotic system that will further their [the robot’s] autonomy. For example, I can teach a robot simple rules so that if two robots are put side by side, and one robot’s temperature increases beyond 70 degrees Centigrade, the second robot switches it [the other robot] off. This is a simple example of one helping another. Once the robot has cooled down, it will regain functionality, and this, in a simple sense, is a repair as well. I can see a few decades down the line, a cyber-physical system that is fairly autonomous as in you can give it a number of parts—raw materials—and there will be fabrication of parts and assembly to make the system functional. Almost a hundred percent of these steps will be performed by robots; however, I cannot see a future where robots are truly intelligent like Skynet—anywhere close to humans at all. I think we have gotten used to overselling the capabilities of robots and that has led to misconceptions of doomsday scenarios.
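
As an editorial illustration, the simple rule described above can be sketched in a few lines of Python. The 70 degree threshold comes from the answer; the `Robot` class, the `supervise` function, and the 50 degree restart threshold are hypothetical choices made only for this sketch.

```python
from dataclasses import dataclass

SHUTOFF_TEMP_C = 70.0   # threshold stated in the interview
RESTART_TEMP_C = 50.0   # assumption: the interview only says "once cooled down"


@dataclass
class Robot:
    """A hypothetical robot that exposes its temperature and power state."""
    name: str
    temperature_c: float
    powered_on: bool = True


def supervise(watcher: Robot, neighbor: Robot) -> None:
    """Let `watcher` apply the overheating rule to `neighbor`."""
    if not watcher.powered_on:
        return  # a robot that is switched off cannot supervise anyone
    if neighbor.powered_on and neighbor.temperature_c > SHUTOFF_TEMP_C:
        neighbor.powered_on = False   # switch off the overheating robot
    elif not neighbor.powered_on and neighbor.temperature_c <= RESTART_TEMP_C:
        neighbor.powered_on = True    # cooled down, so it regains functionality


robot_a = Robot("A", temperature_c=25.0)
robot_b = Robot("B", temperature_c=75.0)

supervise(robot_a, robot_b)    # B is above 70 degrees, so A switches it off
robot_b.temperature_c = 45.0   # B cools down while it is off
supervise(robot_a, robot_b)    # B is below the restart threshold, so A restarts it
```

Using a restart threshold lower than the shutoff threshold keeps a robot hovering near 70 degrees from being switched on and off repeatedly.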

What about the opposite of a doomsday scenario? What if you were able to create autonomous robots that are inherently good? They would not be programmed to do bad things. What if robots were more autonomous and acted as society’s helpers, for example, by working in 120-degree weather? I am mainly talking about a massive overhaul of the workforce by autonomous robots that could repair themselves.

I think in part, yes. We can program them in such a way that they will be able to partially repair themselves. If we think about one extreme where, for example, a robot was completely destroyed by a fire, then other robots would not be able to go to an iron mine, dig up iron, and make steel—that is a long way from where we are now. However, autonomy of robots is a very cool thing. It really helps to advance human society. It can greatly change the way people work in factories and make their lives much better. Especially when we talk about humans and robots working side by side without one completely replacing the other, we realize there is a lot of potential. We can also think about our day-to-day life, where having an autonomous robot would help. Examples would be folding clothes, sweeping your house, or cooking. Cooking is a very complex process: cutting up the ingredients and putting them together are complex mechanical processes. While humans are very good at it, robots would need to learn how to properly do these things.

Where do you see your research in twenty-five years, not just individually but also in working with other faculty? And how will it impact the world? Think about the world of 2044.

I would say that we should have a lot more robots. It is similar to smartphones and electrical appliances. I would like to think that every house will have one or two robots or robotic arms that will help with daily chores in a very safe way. I also think that, when it comes to mechanical robots and so on, there will be innovation that will help us build robots that are so small and autonomous that they can swim through your blood and maybe deliver drugs or fix something within the body. As we design better and simpler robots, we need to find ways to manufacture them at that small scale. Then, we are good to go; we don’t have to cut open any human bodies to fix something. A lot of problems can potentially be fixed using very tiny robots. I would like to highlight the difference in scales. One class of robots, which will help you fold clothes, will be a meter or a few meters in size, whereas the other class of robots, used in drug delivery, would be micrometers in size. In between these scales, there are plenty of opportunities, particularly in agriculture, assistive robots in factories, and so on, that can really change the landscape of how we live and operate.

Interview by Alex Duffy. Images from Prof. Jawed’s lab.